x.com is a nightmare factory – what more will it take for people to leave?

Content warning: this post includes discussions of child abuse material, non-consensual sharing of intimate images, and violence against women.

If this raises anything for you:

- In Australia, call 1800-RESPECT or the Bravehearts support line on 1800 272 831.

- In the UK, call 0808 500 2222 or chat here.

- In Canada, call 1 888 933-9007 or chat here.

- To find local US support services, click here.

I used to love Twitter. For years it was the most-used app on my phone and pinned to the favourites bar of every laptop I used. I met some of my best friends on what we used to jokingly refer to as the hell-site.

I deleted my account in October of 2023, one year after the platform was acquired and rapidly made significantly worse by Elon Musk. I can’t recall the specific initiating event of that day, but I had been thinking of doing it since the deal went through.

Despite my confidence in the decision, it still sucked. For months afterwards I would think of things I wanted to tweet only to realise I had nowhere to put them. Instagram isn’t made to receive short text-based quips from anyone without graphic design skills (unless, ironically, they are screenshots of tweets). I lost contact with some of the people I used to follow. I rebuffed the requests to join Mastodon or Bluesky, telling my friends I was too old to learn a new platform, and it would probably be better for me to be less online (look at me now, reader). Importantly, I survived.

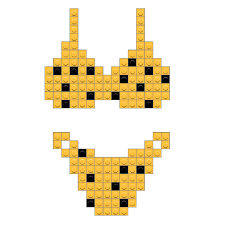

In the past few weeks, the “put her in a bikini” fiasco has been unfolding on the platform, with users directing the in-house LLM Grok to undress, “cover in donut glaze” or otherwise degrade the women in images they’re replying to on the site. No physical act of violence was involved, only image generation, yet women refreshed their feeds to find a convincing image of themselves in just a bikini, covered in what looked like seminal fluid, or in some cases bound and gagged in the back of a car. They reported very real reactions of feeling violated, embarrassed, and scared for their safety.

Violence against women has always been a cornerstone of the internet. The sexualised kind even more so. But not at this scale. Not with a free tool accessible on one of the biggest social media platforms to turn any bored degenerate into someone who could ‘create’ a pornographic image, instantly, of any person they had a photo of.

This is bad enough, but there was a further subterranean level to the depravity. Not all of those targeted were women. Some were children.

Before this trend exploded, 2025 was already the worst year on record for online Child Sexual Abuse Material (CSAM), fuelled in part by image generators. The Internet Watch Foundation (IWF), an NGO working to remove CSAM from the internet, reported a terrifying “26,362% rise in photo-realistic AI videos of child sexual abuse, often including real and recognisable child victims.” Of the AI-generated material reviewed by the IWF, 65% was Category A, the most serious type of abuse material.
